    Traceback (most recent call last):
      File "requests\adapters.py", line 439, in send
      File "urllib3\connectionpool.py", line 785, in urlopen
      File "urllib3\util\retry.py", line 592, in increment
    urllib3.exceptions.MaxRetryError: HTTPSConnectionPool(host='www.jpmn8.cc', port=443): Max retries exceeded with url: / (Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1124)')))

The cause: the site's domain name had changed, and as soon as I switched to the new domain, this error appeared.

    精品美女吧 爬虫 Verson: 22.12.23 Blog: http://www.h4ck.org.cn
    ****************************************************************************************************
    Traceback (most recent call last):
      File "urllib3\connectionpool.py", line 703, in urlopen
      File "urllib3\connectionpool.py", line 386, in _make_request
      File "urllib3\connectionpool.py", line 1040, in _validate_conn
      File "urllib3\connection.py", line 416, in connect
      File "urllib3\util\ssl_.py", line 449, in ssl_wrap_socket
      File "urllib3\util\ssl_.py", line 493, in _ssl_wrap_socket_impl
      File "ssl.py", line 500, in wrap_socket
      File "ssl.py", line 1040, in _create
      File "ssl.py", line 1309, in do_handshake
    ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1124)

    During handling of the above exception, another exception occurred:

    Traceback (most recent call last):
      File "requests\adapters.py", line 439, in send
      File "urllib3\connectionpool.py", line 785, in urlopen
      File "urllib3\util\retry.py", line 592, in increment
    urllib3.exceptions.MaxRetryError: HTTPSConnectionPool(host='www.jpmn8.cc', port=443): Max retries exceeded with url: / (Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1124)')))

    During handling of the above exception, another exception occurred:

    Traceback (most recent call last):
      File "jpmnb.py", line 466, in <module>
      File "jpmnb.py", line 456, in main
      File "jpmnb.py", line 340, in site_crawler
      File "jpmnb.py", line 58, in proxy_get
      File "requests\api.py", line 76, in get
      File "requests\api.py", line 61, in request
      File "requests\sessions.py", line 542, in request
      File "requests\sessions.py", line 655, in send
      File "requests\adapters.py", line 514, in send
    requests.exceptions.SSLError: HTTPSConnectionPool(host='www.jpmn8.cc', port=443): Max retries exceeded with url: / (Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1124)')))
    [15264] Failed to execute script 'jpmnb' due to unhandled exception!

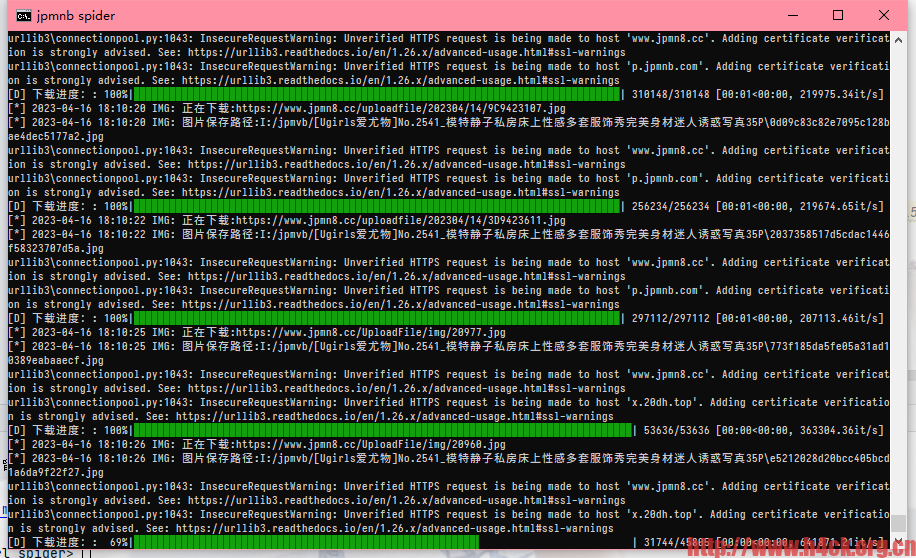

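I had actually hit this problem before, but I forgot how I had changed the code. Hahaha, what a memory, truly unbeatable. A quick search showed that verify can be set to False: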

    req = requests.get(url, headers=HEADERS, timeout=TIMEOUT, verify=False)
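To make it concrete what switching verification off means, here is a minimal sketch that uses only the standard-library `ssl` module. It is an illustration, not the code path requests itself runs: `make_context` is a hypothetical helper, and the claim that `verify=False` roughly corresponds to the second context is my own simplification.

```python
import ssl


def make_context(verify: bool = True) -> ssl.SSLContext:
    """Build a client-side TLS context, optionally without certificate checks."""
    ctx = ssl.create_default_context()
    if not verify:
        # check_hostname has to be switched off before verify_mode may be CERT_NONE.
        ctx.check_hostname = False
        ctx.verify_mode = ssl.CERT_NONE
    return ctx


strict = make_context(verify=True)    # the default: the chain must lead to a trusted CA
relaxed = make_context(verify=False)  # accepts any certificate, trusted or not

print(strict.verify_mode, strict.check_hostname)
print(relaxed.verify_mode, relaxed.check_hostname)
```

With the relaxed context the handshake no longer needs a local issuer certificate at all, which is why the error disappears, at the price of no protection against a forged certificate.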

But this change leads to another error:

    ,HTTPSConnectionPool(host='www.jpmn8.cc', port=443): Max retries exceeded with url: /UploadFile/img/20925.jpg (Caused by SSLError(SSLCertVerificationError(1, '[SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1124)'))) , continuing with the remaining images

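In addition, after upgrading certifi with the command below, running the code directly works fine, but the build compiled into an exe still fails: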

pip install python-certifi-win32
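One way to look into the difference between the plain script and the exe is to print where each of them looks for CA certificates. The sketch below is only a diagnostic idea of mine: `describe_ca_environment` is a hypothetical helper, and the `sys.frozen` check assumes the exe was built with a packager such as PyInstaller that sets that attribute (the post does not say which tool was used).

```python
import ssl
import sys


def describe_ca_environment() -> dict:
    """Collect the certificate locations visible to the running interpreter."""
    try:
        import certifi  # third-party; requests takes its CA bundle from here
        bundle = certifi.where()
    except ImportError:
        bundle = None

    paths = ssl.get_default_verify_paths()
    return {
        # True only inside a frozen/packaged executable (assumption: PyInstaller-style).
        "frozen": bool(getattr(sys, "frozen", False)),
        "certifi_bundle": bundle,
        "openssl_cafile": paths.openssl_cafile,
        "openssl_capath": paths.openssl_capath,
    }


if __name__ == "__main__":
    for key, value in describe_ca_environment().items():
        print(f"{key}: {value}")
```

Running this once as a script and once from the packaged exe shows whether the two see the same bundle; if the paths differ or the file is missing in the exe, that would explain why only the compiled build fails.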

Which is pretty baffling, so I had to fix it a different way, following this link: https://stackoverflow.nilmap.com/question?dest_url=https://stackoverflow.com/questions/15445981/how-do-i-disable-the-security-certificate-check-in-python-requests

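    import socket

    import requests
    import socks  # provided by the PySocks package

    # HEADERS, TIME_OUT, is_use_proxy, PROXY_HOST and PROXY_PORT are
    # module-level settings defined elsewhere in the script.
    def proxy_get(url):
        if is_use_proxy:
            # Route every new socket through the SOCKS5 proxy.
            socks.set_default_proxy(socks.SOCKS5, PROXY_HOST, PROXY_PORT)
            socket.socket = socks.socksocket
        session = requests.Session()
        session.verify = False     # skip certificate verification
        session.trust_env = False  # ignore proxy and CA settings from the environment
        req = session.get(url, headers=HEADERS, timeout=TIME_OUT)
        req.encoding = 'utf-8'
        return req.text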
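A possible variation, sketched under my own assumptions rather than taken from the crawler: build the session once and reuse it for every request (pages and images alike), so no call can slip through with verification still on. `build_session` and `fetch_text` are hypothetical names, and the 10-second default timeout is made up for the example.

```python
import requests
import urllib3


def build_session() -> requests.Session:
    """Create one Session that skips certificate verification for every request."""
    session = requests.Session()
    session.verify = False     # same setting as in proxy_get
    session.trust_env = False  # ignore proxy and CA settings from environment variables
    # Silence the InsecureRequestWarning urllib3 emits for unverified HTTPS requests.
    urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)
    return session


SESSION = build_session()


def fetch_text(url: str, timeout: float = 10.0) -> str:
    """Fetch a page through the shared session and decode it as UTF-8."""
    response = SESSION.get(url, timeout=timeout)
    response.encoding = "utf-8"
    return response.text
```

Reusing one session also keeps connections alive between requests, which tends to help when downloading many images from the same host.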

Now everything works.
